Introduction

Ixa (Interactive eXecution of ABMs) is a Rust framework for building modular agent-based discrete event models for large-scale simulations. While its primary application is modeling disease transmission, its flexible design makes it suitable for a wide range of simulation scenarios.

Ixa is named after the Ixa crab, a genus of Indo-Pacific pebble crabs from the family Leucosiidae.

You are reading The Ixa Book, a tutorial introduction to Ixa. API documentation can be found at https://ixa.rs/doc/ixa.

This book assumes you have a basic familiarity with the command line and at least some experience with programming.

Get Started

If you are new to Rust, we suggest taking some time to learn the parts of Rust that are most useful for Ixa development. We've compiled some resources in rust-resources.md.

Execute the following commands to create a new Rust project called ixa_model.

cargo new --bin ixa_model

cd ixa_model

Use Ixa's new project setup script to set up the project for Ixa.

curl -s https://raw.githubusercontent.com/CDCgov/ixa/main/scripts/setup_new_ixa_project.sh | sh -s

Open src/main.rs in your favorite editor or IDE to verify the model looks like the following:

use ixa::run_with_args;

fn main() {

run_with_args(|context, _, _| {

context.add_plan(1.0, |context| {

println!("The current time is {}", context.get_current_time());

});

Ok(())

})

.unwrap();

}

To run the model:

cargo run

# The current time is 1

To run with logging enabled globally:

cargo run -- --log-level=trace

To run with logging enabled for just ixa_model:

cargo run -- --log-level ixa_model=trace

Command Line Usage

This document contains the help content for the ixa command-line program.

ixa

Default cli arguments for ixa runner

Usage: ixa [OPTIONS]

Options:

- -r, --random-seed <RANDOM_SEED>: Random seed. Default value: 0

- -c, --config: Optional path for a global properties config file

- -o, --output <OUTPUT_DIR>: Optional path for report output

- --prefix <FILE_PREFIX>: Optional prefix for report files

- -f, --force-overwrite: Overwrite existing report files?

- -l, --log-level <LOG_LEVEL>: Enable logging

- -v, --verbose: Increase logging verbosity (-v, -vv, -vvv, etc.)

| Level   | ERROR | WARN | INFO | DEBUG | TRACE |
| ------- | ----- | ---- | ---- | ----- | ----- |
| Default | ✓     |      |      |       |       |
| -v      | ✓     | ✓    | ✓    |       |       |
| -vv     | ✓     | ✓    | ✓    | ✓     |       |
| -vvv    | ✓     | ✓    | ✓    | ✓     | ✓     |

- --warn: Set logging to WARN level. Shortcut for --log-level warn

- --debug: Set logging to DEBUG level. Shortcut for --log-level DEBUG

- --trace: Set logging to TRACE level. Shortcut for --log-level TRACE

- -t, --timeline-progress-max <TIMELINE_PROGRESS_MAX>: Enable the timeline progress bar with a maximum time

- --no-stats: Suppresses the printout of summary statistics at the end of the simulation

Your First Model

In this section we will get acquainted with the basic features of Ixa by implementing a simple infectious disease transmission model. This section is just a starting point. It is not intended to be:

- An introduction to the Rust programming language (or crash course) or software engineering topics like source control with Git

- A tutorial on using a Unix-flavored command line

- An overview or survey of either disease modeling or agent-based modeling

- An exhaustive treatment of all of the features of Ixa

Our Abstract Model

We introduce modeling in Ixa by implementing a simple model for a food-borne illness where infection events follow a Poisson process. We assume that each susceptible person has a constant risk of becoming infected over time, independent of other infections.

this is not the typical SIR model

While this model has susceptible, infected, and recovered disease states, it is different from the canonical "SIR" model. In this model, the risk of infection does not depend on the prevalence of infected persons. Put another way, the people in the model can become infected but they are not infectious.

In this model, each individual susceptible person has an exponentially distributed time until they are infected. The rate of infections is referred to as the force of infection, and the mean time to infection for each individual is the inverse of the force of infection. (It follows that the time between successive infection events is also exponentially distributed.)

Infected individuals subsequently recover and cannot be re-infected. The times to recovery are exponentially distributed.
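To make the exponential waiting times above concrete, here is a minimal sketch that draws times to infection by inverse-transform sampling and averages them. It uses only the Rust standard library. The `SplitMix64` generator and the function names are hypothetical stand-ins chosen for this illustration and are not part of Ixa.

```rust
/// A tiny deterministic pseudorandom generator (SplitMix64), used here only
/// so the example needs no external crates. Not part of Ixa.
pub struct SplitMix64(pub u64);

impl SplitMix64 {
    pub fn next_u64(&mut self) -> u64 {
        self.0 = self.0.wrapping_add(0x9E37_79B9_7F4A_7C15);
        let mut z = self.0;
        z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
        z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
        z ^ (z >> 31)
    }

    /// Uniform value in [0, 1) built from the top 53 bits.
    pub fn next_f64(&mut self) -> f64 {
        (self.next_u64() >> 11) as f64 / (1u64 << 53) as f64
    }
}

/// Inverse-transform sample from an exponential distribution with the given rate.
pub fn sample_exponential(rng: &mut SplitMix64, rate: f64) -> f64 {
    // 1 - u lies in (0, 1], so the logarithm is always finite.
    -(1.0 - rng.next_f64()).ln() / rate
}

/// Average of `n` sampled times to infection for one susceptible person
/// (hypothetical helper for this illustration).
pub fn mean_time_to_infection(force_of_infection: f64, n: u32, seed: u64) -> f64 {
    let mut rng = SplitMix64(seed);
    let total: f64 = (0..n)
        .map(|_| sample_exponential(&mut rng, force_of_infection))
        .sum();
    total / f64::from(n)
}
```

With a force of infection of 0.1, the average over many samples should land near 1 / 0.1 = 10 time units, which is the inverse relationship described above.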

High-level view of how Ixa functions

This diagram gives a high-level view of how Ixa works:

Don't expect to understand everything in this diagram straight away. The major concepts we need to understand about models in Ixa are:

- Context: A Context keeps track of the state of the world for our model and is the primary way code interacts with anything in the running model.

- Timeline: A future event list of the simulation, the timeline is a queue of Callback objects, called plans, that will assume control of the Context at a future point in time and execute the logic in the plan.

- Plan: A piece of logic scheduled to execute at a certain time on the timeline. Plans are added to the timeline through the Context.

- Entities: Generally people in a disease model, the entities in the model dynamically interact over the course of the simulation. Data can be associated with entities as properties.

- Property: Data attached to an entity. In our case, we have people properties.

- Module: An organizational unit of functionality. Simulations are constructed out of a series of interacting modules that take turns manipulating the Context through a mutable reference. Modules store data in the simulation using the DataPlugin trait that allows them to retrieve data by type.

- Event: Modules can also emit events that other modules can subscribe to handle by event type. This allows modules to broadcast that specific things have occurred and have other modules take turns reacting to these occurrences. An example of an event might be a person becoming infected by a disease.

tip

The term "agent" is sometimes used as a synonym for "entity."
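The idea of a timeline as a queue of plans can be sketched with the standard library alone. The toy below is an assumption-laden illustration, not Ixa's implementation: `ToyContext`, `ToyTimeline`, and `run_demo` are hypothetical names, and the real Context does far more than keep a clock and a log.

```rust
use std::cmp::Ordering;
use std::collections::BinaryHeap;

/// Toy stand-in for a simulation context: just a clock and a log of what ran.
pub struct ToyContext {
    pub current_time: f64,
    pub log: Vec<String>,
}

/// A plan: a callback scheduled to run at a given time.
struct Plan {
    time: f64,
    callback: Box<dyn FnOnce(&mut ToyContext)>,
}

// Order plans so that the earliest time comes out of the max-heap first.
impl PartialEq for Plan {
    fn eq(&self, other: &Self) -> bool {
        self.cmp(other) == Ordering::Equal
    }
}
impl Eq for Plan {}
impl PartialOrd for Plan {
    fn partial_cmp(&self, other: &Self) -> Option<Ordering> {
        Some(self.cmp(other))
    }
}
impl Ord for Plan {
    fn cmp(&self, other: &Self) -> Ordering {
        // Reversed comparison: a smaller time means a higher priority.
        other.time.total_cmp(&self.time)
    }
}

/// Toy timeline: a priority queue of plans keyed by time.
#[derive(Default)]
pub struct ToyTimeline {
    queue: BinaryHeap<Plan>,
}

impl ToyTimeline {
    pub fn add_plan(&mut self, time: f64, callback: impl FnOnce(&mut ToyContext) + 'static) {
        self.queue.push(Plan { time, callback: Box::new(callback) });
    }

    /// Runs every plan in time order, advancing the clock before each one.
    pub fn execute(&mut self, context: &mut ToyContext) {
        while let Some(plan) = self.queue.pop() {
            context.current_time = plan.time;
            (plan.callback)(&mut *context);
        }
    }
}

/// Schedules two plans out of order and returns the log they produce.
pub fn run_demo() -> Vec<String> {
    let mut timeline = ToyTimeline::default();
    let mut context = ToyContext { current_time: 0.0, log: Vec::new() };
    timeline.add_plan(2.0, |ctx| ctx.log.push(format!("recover at {}", ctx.current_time)));
    timeline.add_plan(1.0, |ctx| ctx.log.push(format!("infect at {}", ctx.current_time)));
    timeline.execute(&mut context);
    context.log
}
```

Even though the recovery plan is added first, the infection plan runs first because it is scheduled for an earlier time. In this toy, plans cannot schedule further plans, which is a simplification made to keep the sketch short.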

The organization of a model's implementation

A model in Ixa is a computer program written in the Rust programming language that uses the Ixa library (or "crate" in the language of Rust). A model is organized into a set of modules that work together to provide all of the functions of the simulation. For instance, a simple disease transmission model might consist of the following modules:

- A population loader that initializes the set of people represented by the simulation.

- A transmission manager that models the process of how a susceptible person in the population becomes infected.

- An infection manager that transitions infected people through stages of disease until recovery.

- A reporting module that records data about how the disease evolves through the population to a file for later analysis.

The single responsibility principle in software engineering is a key idea behind modularity. It states that each module should have one clear purpose or responsibility. By designing each module to perform a single task (for example, loading the population data, managing the transmission of the disease, or handling infection progression), you create a system where each part is easier to understand, test, and maintain. This not only helps prevent errors but also allows us to iterate and improve each component independently.

In the context of our disease transmission model:

- The population loader is solely responsible for setting up the initial state of the simulation by importing and structuring the data about people.

- The transmission manager focuses exclusively on modeling the process by which persons get infected.

- The infection manager takes care of the progression of the disease within an infected individual until recovery.

- The reporting module handles data collection and output, ensuring that results are recorded accurately.

By organizing the model into these distinct modules, each with a single responsibility, we ensure that our simulation remains organized and manageable—even as the complexity of the model grows.

The rest of this chapter develops each of the modules of our model one-by-one.

Setting Up Your First Model

Create a new project with Cargo

Let's set up the bare-bones skeleton of our first model. First decide where your

Ixa-related code is going to live on your computer. On my computer, that's the

Code directory in my home folder (or ~ for short). I will use my directory

structure for illustration purposes in this section. Just modify the commands

for wherever you chose to store your models.

Navigate to the directory you have chosen for your models and then use Cargo to

initialize a new Rust project called disease_model.

cd ~/Code

cargo new --bin disease_model

Cargo creates a directory named disease_model with a project skeleton for us.

Open the newly created disease_model directory in your favorite IDE, like

VSCode (free) or

RustRover.

🏠 home/

└── 🗂️ Code/

└── 🗂️ disease_model/

├── 🗂️ src/

│ └── 📄 main.rs

├── .gitignore

└── 📄 Cargo.toml

source control

The .gitignore file lists all the files and directories you don't want to

include in source control. For a Rust project you should at least have

target and Cargo.lock listed in the .gitignore. I also make a habit of

listing .vscode and .idea, the directories where VS Code and JetBrains IDEs,

respectively, store project settings.

cargo

Cargo is Rust's package manager and build system. It is a single tool that

plays the role of the multiple different tools you would use in other

languages, such as pip and poetry in the Python ecosystem. We use Cargo to

- install tools like ripgrep (cargo install)

- initialize new projects (cargo new and cargo init)

- add new project dependencies (cargo add serde)

- update dependency versions (cargo update)

- check the project's code for errors (cargo check)

- download and build the correct dependencies with the correct feature flags (cargo build)

- build the project's targets, including examples and tests (cargo build)

- generate documentation (cargo doc)

- run tests and benchmarks (cargo test, cargo bench)

Setup Dependencies and Cargo.toml

Ixa comes with a convenience script for setting up new Ixa projects. Change

directory to disease_model/, the project root, and run this command.

curl -s https://raw.githubusercontent.com/CDCgov/ixa/main/scripts/setup_new_ixa_project.sh | sh -s

The script adds the Ixa library as a project dependency and provides you with a

minimal Ixa program in src/main.rs.

Dependencies

We will depend on a few external libraries in addition to Ixa. The cargo add

command makes this easy.

cargo add csv rand_distr serde

Cargo.toml

Cargo stores information about these dependencies in the Cargo.toml file. This

file also stores metadata about your project used when publishing your project

to Crates.io. Even though we won't be publishing the crate to Crates.io, it's a

good idea to get into the habit of adding at least the author(s) and a brief

description of the project.

# Cargo.toml

[package]

name = "disease_model"

description = "A basic disease model using the Ixa agent-based modeling framework"

authors = ["John Doe <jdoe@example.com>"]

version = "0.1.0"

edition = "2024"

publish = false # Do not publish to the Crates.io registry

[dependencies]

csv = "1.3.1"

ixa = { git = "https://github.com/CDCgov/ixa", branch = "main" }

rand_distr = "0.5.1"

serde = { version = "1.0.217", features = ["derive"] }

Executing the Ixa model

We are almost ready to execute our first model. Edit src/main.rs to look like

this:

// main.rs

use ixa::run_with_args;

fn main() {

run_with_args(|context, _, _| {

context.add_plan(1.0, |context| {

println!("The current time is {}", context.get_current_time());

});

Ok(())

})

.unwrap();

}

Don't let this code intimidate you; it's really quite simple. The first line says

we want to use symbols from the ixa library in the code that follows. In

main(), the first thing we do is call run_with_args(). The run_with_args()

function takes as an argument a closure inside which we can do additional setup

before the simulation is kicked off if necessary. The only setup we do is

schedule a plan at time 1.0. The plan is itself another closure that prints the

current simulation time.

closures

A closure is a small, self-contained block of code that can be passed around and executed later. It can capture and use variables from its surrounding environment, which makes it useful for things like callbacks, event handlers, or any situation where you want to define some logic on the fly and run it at a later time. In simple terms, a closure is like a mini anonymous function.
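As a small illustration of the description above, the following plain-Rust sketch builds a closure that captures a variable and hands it to another function to run later. The names `make_reporter` and `run_later` are hypothetical and exist only for this example.

```rust
/// Returns a closure that remembers `label` and can be run later.
pub fn make_reporter(label: String) -> impl Fn(f64) -> String {
    // `move` transfers ownership of `label` into the closure, so the closure
    // can outlive the function that created it.
    move |time| format!("{label}: the current time is {time}")
}

/// Runs a callback at a later point, the way a scheduler might.
pub fn run_later(callback: impl Fn(f64) -> String, time: f64) -> String {
    callback(time)
}
```

Calling `run_later(make_reporter("plan".to_string()), 1.0)` produces the string "plan: the current time is 1". The closure carries its captured `label` along with it, which is what distinguishes it from an ordinary function.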

The run_with_args() function does the following:

- It sets up a Context object for us, parsing and applying any command line arguments and initializing subsystems accordingly. A Context keeps track of the state of the world for our model and is the primary way code interacts with anything in the running model.

- It executes our closure, passing it a mutable reference to context so we can do any additional setup.

- Finally, it kicks off the simulation by executing context.execute(). Of course, our model doesn't actually do anything or even contain any data, so context.execute() checks that there is no work to do and immediately returns.

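If there is an error at any stage, run_with_args() will return an error

result. The Rust compiler will complain if we do not handle the returned result,

either by checking for the error or explicitly opting out of the check, which

encourages us to do the responsible thing. In the code above, .unwrap() opts out

of the check and stops the program if an error occurred; a match on the result,

as in the version of main.rs later in this chapter, checks for the error instead.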
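The difference between checking a result and opting out can be shown in plain Rust. In this sketch, `SetupError` and `run_toy_simulation` are hypothetical stand-ins for a fallible entry point; they are not Ixa types or functions.

```rust
use std::fmt;

/// Hypothetical error type standing in for whatever a simulation run might report.
#[derive(Debug)]
pub struct SetupError(pub String);

impl fmt::Display for SetupError {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        write!(f, "{}", self.0)
    }
}

/// Hypothetical stand-in for a fallible entry point.
pub fn run_toy_simulation(fail: bool) -> Result<(), SetupError> {
    if fail {
        Err(SetupError("could not open config".to_string()))
    } else {
        Ok(())
    }
}

/// Checks the result explicitly and turns it into a status message.
pub fn describe(result: Result<(), SetupError>) -> String {
    match result {
        Ok(()) => "Simulation finished executing".to_string(),
        Err(e) => format!("Simulation exited with error: {e}"),
    }
}
```

Calling `.unwrap()` on the `Err` case would instead panic and end the program, which is acceptable in a tiny example but gives the caller no chance to report the problem gracefully.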

We can build and run our model from the command line using Cargo:

cargo run

Enabling Logging

The model doesn't do anything yet—it doesn't even emit the log messages we included. We can turn those on to see what is happening inside our model during development with the following command line argument:

cargo run -- --log-level trace

This turns on messages emitted by Ixa itself, too. If you only want to see

messages emitted by disease_model, you can specify the module in addition to

the log level:

cargo run -- --log-level disease_model=trace

logging

The trace!, info!, and error! logging macros allow us to print messages

to the console, but they are much more powerful than a simple print statement.

With log messages, you can:

- Turn log messages on and off as needed.

- Enable only messages with a specified priority (for example, only warnings or higher).

- Filter messages to show only those emitted from a specific module, like the

people module we write in the next section.

See the logging documentation for more details.

command line arguments

The run_with_args() function takes care of handling any command line

arguments for us, which is why we don't just create a Context object and

call context.execute() ourselves. There are many arguments we can pass to

our model that affect what is output and where, debugging options,

configuration input, and so forth. See the command line documentation for more

details.

In the next section we will add people to our model.

The People Module

In Ixa we organize our models into modules, each of which is responsible for a single aspect of the model.

modules

In fact, the code of Ixa itself is organized into modules in just the same way models are.

Ixa is a framework for developing agent-based models. In most of our models,

the agents will represent people. So let's create a module that is responsible

for people and their properties—the data that is attached to each person. Create

a new file in the src directory called people.rs.

Defining an Entity and Property

// people.rs

use ixa::prelude::*;

use ixa::trace;

use crate::POPULATION;

define_entity!(Person);

define_property!(

// The type of the property

enum InfectionStatus {

S,

I,

R,

},

// The entity the property is associated with

Person,

// The property's default value for newly created `Person` entities

default_const = InfectionStatus::S

);

/// Populates the "world" with the `POPULATION` number of people.

pub fn init(context: &mut Context) {

trace!("Initializing people");

for _ in 0..POPULATION {

let _ = context.add_entity(Person).expect("failed to add person");

}

}

We have to define the Person entity before we can associate properties with

it. The define_entity!(Person) macro invocation automatically defines the

Person type, implements the Entity trait for Person, and creates the type

alias PersonId = EntityId<Person>, which is the type we can use to represent

specific instances of our entity, a single person, in our simulation.

To each person we will associate a value of the enum (short for “enumeration”)

named InfectionStatus. An enum is a way to create a type that can be one of

several predefined values. Here, we have three values:

- S: Represents someone who is susceptible to infection.

- I: Represents someone who is currently infected.

- R: Represents someone who has recovered.

Each value in the enum corresponds to a stage in our simple model. The enum

value for a person's InfectionStatus property will refer to an individual’s

health status in our simulation.
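For readers new to enums, here is a plain-Rust sketch of the same three states, written without the define_property! macro so it can be read on its own. The helper functions `describe` and `next_status` are hypothetical and only illustrate how `match` works with an enum.

```rust
/// Plain-Rust version of the three disease states (no Ixa macros involved).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum InfectionStatus {
    S,
    I,
    R,
}

/// Human-readable name for each state.
pub fn describe(status: InfectionStatus) -> &'static str {
    match status {
        InfectionStatus::S => "susceptible",
        InfectionStatus::I => "infected",
        InfectionStatus::R => "recovered",
    }
}

/// The only transitions this simple model allows: S -> I -> R.
/// Recovered people cannot be re-infected, so R has no next state.
pub fn next_status(status: InfectionStatus) -> Option<InfectionStatus> {
    match status {
        InfectionStatus::S => Some(InfectionStatus::I),
        InfectionStatus::I => Some(InfectionStatus::R),
        InfectionStatus::R => None,
    }
}
```

Because `match` must cover every variant, the compiler flags any place that forgets to handle a state if a new one is added later.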

The module's init() function

While not strictly enforced by Ixa, the general formula for an Ixa module is:

- "public" data types and functions

- "private" data types and functions

The init() function is how your module will insert any data into the context

and set up whatever initial conditions it requires before the simulation begins.

For our people module, the init() function just inserts people into the

Context.

// Populates the "world" with people.

pub fn init(context: &mut Context) {

trace!("Initializing people");

for _ in 0..100 {

let _ = context.add_entity(Person).expect("failed to add person");

}

}

We use Person here to represent a new entity with all default property values;

our one and only Property was defined to have a default value of

InfectionStatus::S (susceptible), so no additional information is needed.

The .expect("failed to add person") method call handles the case where adding

a person could fail. We could intercept that failure if we wanted, but in this

simple case we will just let the program crash with a message about the reason:

"failed to add person".

The Context::add_entity method returns an entity ID wrapped in a Result,

which the expect method unwraps. We can use this ID if we need to refer to

this newly created person. Since we don't need it, we assign the value to the

special "don't care" variable _ (underscore), which just throws the value

away.

Constants

Having "magic numbers" embedded in your code, such as the constant 100 here

representing the total number of people in our model, is bad practice.

What if we want to change this value later? Will we even be able to find it in

all of our source code? Ixa has a formal mechanism for managing these kinds of

model parameters, but for now we will just define a "static constant" near the

top of src/main.rs named POPULATION and replace the literal 100 with

POPULATION:

use ixa::prelude::*;

use ixa::trace;

use crate::POPULATION;

define_entity!(Person);

define_property!(

// The type of the property

enum InfectionStatus {

S,

I,

R,

},

// The entity the property is associated with

Person,

// The property's default value for newly created `Person` entities

default_const = InfectionStatus::S

);

/// Populates the "world" with the `POPULATION` number of people.

pub fn init(context: &mut Context) {

trace!("Initializing people");

for _ in 0..POPULATION {

let _ = context.add_entity(Person).expect("failed to add person");

}

}

Let's revisit src/main.rs:

mod incidence_report;

mod infection_manager;

mod people;

mod transmission_manager;

use ixa::{error, info, run_with_args, Context};

static POPULATION: u64 = 100;

static FORCE_OF_INFECTION: f64 = 0.1;

static INFECTION_DURATION: f64 = 10.0;

static MAX_TIME: f64 = 200.0;

fn main() {

let result = run_with_args(|context: &mut Context, _args, _| {

// Add a plan to shut down the simulation after `MAX_TIME`, regardless of

// what else is happening in the model.

context.add_plan(MAX_TIME, |context| {

context.shutdown();

});

people::init(context);

transmission_manager::init(context);

infection_manager::init(context);

incidence_report::init(context).expect("Failed to init incidence report");

Ok(())

});

match result {

Ok(_) => {

info!("Simulation finished executing");

}

Err(e) => {

error!("Simulation exited with error: {}", e);

}

}

}

- Your IDE might have added the mod people; line for you. If not, add it now. It tells the compiler that the people module is attached to the main module (that is, main.rs).

- We also need to declare our static constant for the total number of people.

- We need to initialize the people module.

Imports

Turning back to src/people.rs, your IDE might have been complaining to you

about not being able to find things "in this scope"—or, if you are lucky, your

IDE was smart enough to import the symbols you need at the top of the file

automatically. The issue is that the compiler needs to know where externally

defined items are coming from, so we need to have use statements at the top of

the file to import those items. Here is the complete src/people.rs file:

use ixa::prelude::*;

use ixa::trace;

use crate::POPULATION;

define_entity!(Person);

define_property!(

// The type of the property

enum InfectionStatus {

S,

I,

R,

},

// The entity the property is associated with

Person,

// The property's default value for newly created `Person` entities

default_const = InfectionStatus::S

);

/// Populates the "world" with the `POPULATION` number of people.

pub fn init(context: &mut Context) {

trace!("Initializing people");

for _ in 0..POPULATION {

let _ = context.add_entity(Person).expect("failed to add person");

}

}

The Transmission Manager

We call the module in charge of initiating new infections the transmission

manager. Create the file src/transmission_manager.rs and add

mod transmission_manager; to the top of src/main.rs right next to the

mod people; statement. We need to flesh out this skeleton.

// transmission_manager.rs

use ixa::Context;

use ixa::trace;

fn attempt_infection(context: &mut Context) {

// attempt an infection...

}

pub fn init(context: &mut Context) {

trace!("Initializing transmission manager");

// initialize the transmission manager...

}

Constants

Recall our abstract model: We assume that each susceptible person has a constant risk of becoming infected over time, independent of past infections, expressed as a force of infection.

There are at least three ways to implement this model:

- At the start of the simulation, schedule each person's infection. This approach is possible because, in this model, everyone will eventually be infected, and all infections occur independently of one another.

- At the start of the simulation, schedule a single infection. When that infection occurs, schedule the next infection. If, for each susceptible person, the time to infection is exponentially distributed, then the time until the next infection of any susceptible person in the simulation is also exponentially distributed, with a rate equal to the force of infection times the number of susceptibles. Upon any one infection, we select the next infectee at random from the remaining susceptibles and schedule their infection.

- Schedule infection attempts, occurring at a rate equal to the force of infection times the total number of people. Upon any one infection attempt, we check if the attempted infectee is susceptible, and, if so, infect them. We then select the next attempted infectee at random from the entire population, and schedule their attempted infection.

These three approaches are mathematically equivalent. Here we demonstrate the third approach because it is the simplest to implement in Ixa.
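One way to see why the third approach matches the abstract model: attempts arrive at rate (force of infection × population), and each attempt lands on any particular person with probability 1 / population, so that person is hit at rate equal to the force of infection. The std-only sketch below checks this numerically. `SplitMix64` and both functions are hypothetical helpers written for this illustration, not Ixa code, and the sketch waits for the first attempt rather than making one at time zero.

```rust
/// Tiny deterministic generator (SplitMix64) so the example needs no crates.
pub struct SplitMix64(pub u64);

impl SplitMix64 {
    pub fn next_u64(&mut self) -> u64 {
        self.0 = self.0.wrapping_add(0x9E37_79B9_7F4A_7C15);
        let mut z = self.0;
        z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
        z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
        z ^ (z >> 31)
    }

    /// Uniform value in [0, 1).
    pub fn next_f64(&mut self) -> f64 {
        (self.next_u64() >> 11) as f64 / (1u64 << 53) as f64
    }
}

/// Simulates infection attempts and returns how long one particular person
/// (index 0) waits until an attempt lands on them.
pub fn time_until_person_zero_is_hit(
    rng: &mut SplitMix64,
    force_of_infection: f64,
    population: u64,
) -> f64 {
    let attempt_rate = force_of_infection * population as f64;
    let mut time = 0.0;
    loop {
        // Wait an exponentially distributed time for the next attempt...
        time += -(1.0 - rng.next_f64()).ln() / attempt_rate;
        // ...then pick the attempted infectee uniformly from everyone.
        if rng.next_u64() % population == 0 {
            return time;
        }
    }
}

/// Average waiting time over many independent trials.
pub fn mean_time_to_infection(
    force_of_infection: f64,
    population: u64,
    trials: u32,
    seed: u64,
) -> f64 {
    let mut rng = SplitMix64(seed);
    let total: f64 = (0..trials)
        .map(|_| time_until_person_zero_is_hit(&mut rng, force_of_infection, population))
        .sum();
    total / f64::from(trials)
}
```

With a force of infection of 0.1 and a population of 100, the average wait should come out near 10 time units, the same mean as sampling each person's infection time directly from an exponential distribution with rate 0.1.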

We have already dealt with constants when we defined the constant POPULATION

in main.rs. Let's define FORCE_OF_INFECTION right next to it. We also cap

the simulation time to an arbitrarily large number, a good practice that

prevents the simulation from running forever in case we make a programming

error.

// main.rs

mod incidence_report;

mod infection_manager;

mod people;

mod transmission_manager;

use ixa::{error, info, run_with_args, Context};

static POPULATION: u64 = 100;

static FORCE_OF_INFECTION: f64 = 0.1;

static INFECTION_DURATION: f64 = 10.0;

static MAX_TIME: f64 = 200.0;

fn main() {

let result = run_with_args(|context: &mut Context, _args, _| {

// Add a plan to shut down the simulation after `MAX_TIME`, regardless of

// what else is happening in the model.

context.add_plan(MAX_TIME, |context| {

context.shutdown();

});

people::init(context);

transmission_manager::init(context);

infection_manager::init(context);

incidence_report::init(context).expect("Failed to init incidence report");

Ok(())

});

match result {

Ok(_) => {

info!("Simulation finished executing");

}

Err(e) => {

error!("Simulation exited with error: {}", e);

}

}

}

// ...the rest of the file...

Infection Attempts

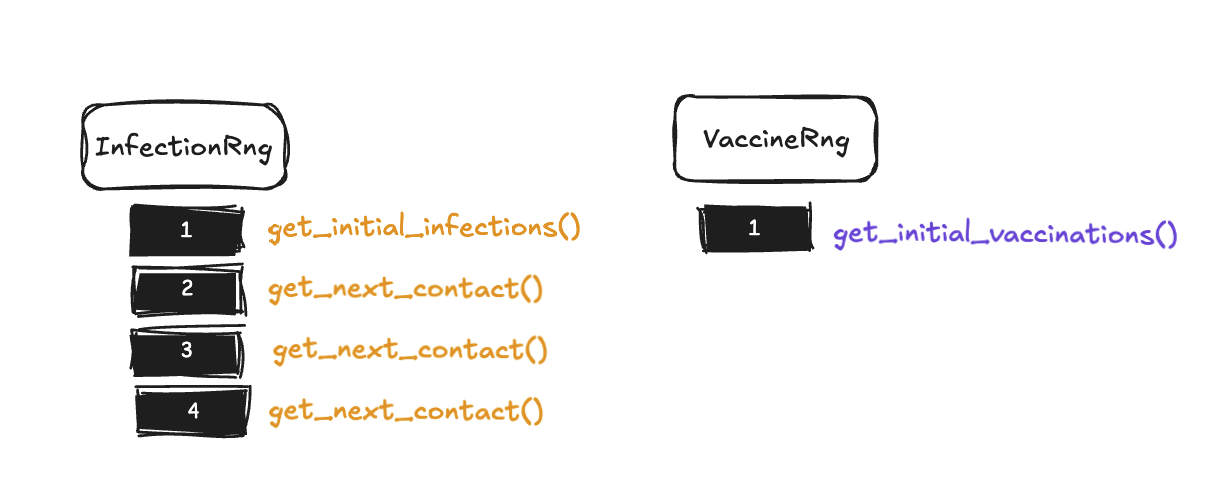

We need to import these constants into transmission_manager. To define a new

random number source in Ixa, we use define_rng!. There are other symbols from

Ixa we will need for the implementation of attempt_infection(). You can have

your IDE add these imports for you as you go, or you can add them yourself now.

// transmission_manager.rs

use ixa::prelude::*;

use ixa::trace;

use rand_distr::Exp;

use crate::people::{InfectionStatus, Person};

use crate::{FORCE_OF_INFECTION, POPULATION};

define_rng!(TransmissionRng);

// ...the rest of the file...

The function attempt_infection() needs to do the following:

- Randomly sample a person from the population to attempt to infect.

- Check the sampled person's current InfectionStatus, changing it to infected (InfectionStatus::I) if and only if the person is currently susceptible (InfectionStatus::S).

- Schedule the next infection attempt by inserting a plan into the timeline that will run attempt_infection() again.

use ixa::prelude::*;

use ixa::trace;

use rand_distr::Exp;

use crate::people::{InfectionStatus, Person};

use crate::{FORCE_OF_INFECTION, POPULATION};

define_rng!(TransmissionRng);

fn attempt_infection(context: &mut Context) {

trace!("Attempting infection");

let person_to_infect = context.sample_entity(TransmissionRng, Person).unwrap();

let person_status: InfectionStatus = context.get_property(person_to_infect);

if person_status == InfectionStatus::S {

context.set_property(person_to_infect, InfectionStatus::I);

}

let current_time = context.get_current_time();

let delay_to_next_attempt = context.sample_distr(

TransmissionRng,

Exp::new(FORCE_OF_INFECTION * POPULATION as f64).unwrap(),

);

let next_attempt_time = current_time + delay_to_next_attempt;

context.add_plan(next_attempt_time, attempt_infection);

}

pub fn init(context: &mut Context) {

trace!("Initializing transmission manager");

context.add_plan(0.0, attempt_infection);

}

Read through this implementation and make sure you understand how it accomplishes the three tasks above. A few observations:

- The method call context.sample_entity(TransmissionRng, Person) takes the name of a random number source and a query and returns an Option<PersonId>, which can have the value of Some(PersonId) or None. In this case, we use Person and no property filters, which means we want to sample from the entire population. If we wanted to, we could pass filters with the with! macro (e.g., with!(Person, Region("California"))). The population will never be empty, so the result will never be None, and so we just call unwrap() on the Some(PersonId) value to get the PersonId.

- If the sampled person is not susceptible, then the only thing this function does is schedule the next attempt at infection.

- The time at which the next attempt is scheduled is sampled randomly from the exponential distribution according to our abstract model and using the random number source TransmissionRng that we defined specifically for this purpose.

- None of this code refers to the people module (except to import the types InfectionStatus and Person) or the infection manager we are about to write, demonstrating the software engineering principle of modularity.

random number generators

Each module generally defines its own random number source with define_rng!,

avoiding interfering with the random number sources used elsewhere in the

simulation in order to preserve determinism. In Monte Carlo simulations,

deterministic pseudorandom number sequences are desirable because they

ensure reproducibility, improve efficiency, provide control over randomness,

enable consistent statistical testing, and reduce the likelihood of bias or

error. These qualities are critical in scientific computing, optimization

problems, and simulations that require precise and verifiable results.
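The benefit of separate random number sources can be illustrated without Ixa. In the sketch below, each "module" gets its own stream derived from a shared base seed, so extra draws in one module leave the other module's sequence untouched. The seeding scheme, `SplitMix64`, and all function names are assumptions made for this example; they do not describe how define_rng! works internally.

```rust
/// Tiny deterministic generator (SplitMix64); not part of Ixa.
pub struct SplitMix64 {
    state: u64,
}

impl SplitMix64 {
    pub fn new(seed: u64) -> Self {
        Self { state: seed }
    }

    pub fn next_u64(&mut self) -> u64 {
        self.state = self.state.wrapping_add(0x9E37_79B9_7F4A_7C15);
        let mut z = self.state;
        z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
        z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
        z ^ (z >> 31)
    }
}

/// Derives a per-module stream from a base seed and a module-specific tag
/// (a hypothetical scheme chosen for this sketch).
pub fn stream_for(base_seed: u64, module_tag: u64) -> SplitMix64 {
    SplitMix64::new(base_seed ^ module_tag.wrapping_mul(0x9E37_79B9_7F4A_7C15))
}

/// Takes the next `n` values from a stream.
pub fn draws(rng: &mut SplitMix64, n: usize) -> Vec<u64> {
    (0..n).map(|_| rng.next_u64()).collect()
}

/// Returns the first three "infection" draws after the "transmission" module
/// has consumed `transmission_draws` values from its own stream.
pub fn infection_draws_after_transmission_draws(transmission_draws: usize) -> Vec<u64> {
    let mut transmission = stream_for(42, 1);
    let mut infection = stream_for(42, 2);
    let _ = draws(&mut transmission, transmission_draws);
    draws(&mut infection, 3)
}
```

The infection stream yields the same values whether the transmission stream was used zero times or a hundred times. With a single shared generator, changing how often one module draws would shift every later value seen by the other module.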

The Infection Manager

The infection manager (infection_manager.rs) is responsible for the evolution

of an infected person after they have been infected. In this simple model, there

is only one thing for the infection manager to do: schedule the time an infected

person recovers. We've already seen how to change a person's InfectionStatus

property and how to schedule plans on the timeline in the transmission module.

But how does the infection manager know about new infections?

Events

Modules can subscribe to events. The infection manager registers a function with Ixa that will be called in response to a change in a particular property.

// in infection_manager.rs

use ixa::prelude::*;

use rand_distr::Exp;

use crate::INFECTION_DURATION;

use crate::people::{InfectionStatus, Person, PersonId};

pub type InfectionStatusEvent = PropertyChangeEvent<Person, InfectionStatus>;

define_rng!(InfectionRng);

fn schedule_recovery(context: &mut Context, person_id: PersonId) {

trace!("Scheduling recovery");

let current_time = context.get_current_time();

let sampled_infection_duration =

context.sample_distr(InfectionRng, Exp::new(1.0 / INFECTION_DURATION).unwrap());

let recovery_time = current_time + sampled_infection_duration;

context.add_plan(recovery_time, move |context| {

context.set_property(person_id, InfectionStatus::R);

});

}

fn handle_infection_status_change(context: &mut Context, event: InfectionStatusEvent) {

trace!(

"Handling infection status change from {:?} to {:?} for {:?}",

event.previous, event.current, event.entity_id

);

if event.current == InfectionStatus::I {

schedule_recovery(context, event.entity_id);

}

}

pub fn init(context: &mut Context) {

trace!("Initializing infection_manager");

context.subscribe_to_event::<InfectionStatusEvent>(handle_infection_status_change);

}

The pub type InfectionStatusEvent line isn't defining a new struct or even a new type. Rather, it defines an

alias for PropertyChangeEvent<E: Entity, P: Property<E>> with the generic

types instantiated for the property we want to monitor, InfectionStatus. This

is effectively the name of the event we subscribe to in the module's init()

function:

// in infection_manager.rs
pub fn init(context: &mut Context) {
    trace!("Initializing infection_manager");
    context.subscribe_to_event::<InfectionStatusEvent>(handle_infection_status_change);
}

The event handler is just a regular Rust function that takes a Context and an InfectionStatusEvent, the latter of which holds the PersonId of the person whose InfectionStatus changed, the current InfectionStatus value, and the previous InfectionStatus value.

// in infection_manager.rs
fn handle_infection_status_change(context: &mut Context, event: InfectionStatusEvent) {
    trace!(
        "Handling infection status change from {:?} to {:?} for {:?}",
        event.previous, event.current, event.entity_id
    );
    if event.current == InfectionStatus::I {
        schedule_recovery(context, event.entity_id);
    }
}

We only care about new infections in this model, so the handler ignores every change except a transition to InfectionStatus::I.

Scheduling Recovery

As in attempt_infection(), we sample the recovery time from the exponential

distribution with mean INFECTION_DURATION, a constant we define in main.rs.

We define a random number source for this module's exclusive use with

define_rng!(InfectionRng) as we did before.

// in infection_manager.rs
define_rng!(InfectionRng);

fn schedule_recovery(context: &mut Context, person_id: PersonId) {
    trace!("Scheduling recovery");
    let current_time = context.get_current_time();
    let sampled_infection_duration =
        context.sample_distr(InfectionRng, Exp::new(1.0 / INFECTION_DURATION).unwrap());
    let recovery_time = current_time + sampled_infection_duration;
    context.add_plan(recovery_time, move |context| {
        context.set_property(person_id, InfectionStatus::R);
    });
}

Notice that the plan is again just a Rust function, but this time it takes the form of a closure rather than a traditionally defined function. This is convenient when the function is only a line or two.

closures and captured variables

The move keyword in the syntax for Rust closures instructs the closure to take ownership of any variables it uses from its surrounding context. These are known as captured variables. Normally, when a closure refers to variables defined outside of its own body, it borrows them, which means it uses references to those values. With move, the closure instead takes full ownership by moving the variables into its own scope. This is especially useful when the closure must outlive the current scope or be passed to another thread, because the closure then owns its data and does not rely on references that might become invalid. That is exactly the situation in schedule_recovery(): the plan runs long after schedule_recovery() has returned, so it must carry person_id with it.
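The effect of move can be seen in a few lines of plain Rust. The sketch below does not use Ixa at all: the Plan alias and the functions make_recovery_plan and run_plans are hypothetical names invented for illustration, standing in for a timeline that stores callbacks and runs them later.

```rust
// Hypothetical stand-in for a timeline entry: a boxed callback that runs later.
type Plan = Box<dyn FnOnce() -> String>;

// Builds a plan that still knows `person_id` after this function has returned.
fn make_recovery_plan(person_id: usize) -> Plan {
    // Without `move`, the closure would only borrow `person_id`, which goes
    // out of scope when this function returns, and the compiler would reject
    // the code. With `move`, the closure owns the value.
    Box::new(move || format!("person {person_id} recovered"))
}

// Runs every stored plan, long after the plans were created.
fn run_plans(plans: Vec<Plan>) -> Vec<String> {
    plans.into_iter().map(|plan| plan()).collect()
}
```

Removing the move keyword from make_recovery_plan produces a compile error about a borrowed value that does not live long enough, which is the failure that move prevents.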

The Incidence Reporter

An agent-based model does not output an answer at the end of a simulation in the usual sense. Rather, the simulation evolves the state of the world over time. If we want to track that evolution for later analysis, it is up to us to collect the data we want to have. The built-in report feature makes it easy to record data to a CSV file during the simulation.

Our model will only have a single report that records the current in-simulation

time, the PersonId, and the InfectionStatus of a person whenever their

InfectionStatus changes. We define a struct representing a single row of data.

// in incidence_report.rs
use std::path::PathBuf;

use ixa::prelude::*;
use ixa::trace;
use serde::Serialize;

use crate::infection_manager::InfectionStatusEvent;
use crate::people::{InfectionStatus, PersonId};

// One row of the CSV report.
#[derive(Serialize, Clone)]
struct IncidenceReportItem {
    time: f64,
    person_id: PersonId,
    infection_status: InfectionStatus,
}

define_report!(IncidenceReportItem);

fn handle_infection_status_change(context: &mut Context, event: InfectionStatusEvent) {
    trace!(
        "Recording infection status change from {:?} to {:?} for {:?}",
        event.previous, event.current, event.entity_id
    );
    context.send_report(IncidenceReportItem {
        time: context.get_current_time(),
        person_id: event.entity_id,
        infection_status: event.current,
    });
}

pub fn init(context: &mut Context) -> Result<(), IxaError> {
    trace!("Initializing incidence_report");

    // Output directory is relative to the directory with the Cargo.toml file.
    let output_path = PathBuf::from(env!("CARGO_MANIFEST_DIR"));

    // In the configuration of report options below, we set `overwrite(true)`, which is not
    // recommended for production code in order to prevent accidental data loss. It is set
    // here so that newcomers won't have to deal with a confusing error while running
    // examples.
    context
        .report_options()
        .directory(output_path)
        .overwrite(true);
    context.add_report::<IncidenceReportItem>("incidence")?;
    context.subscribe_to_event::<InfectionStatusEvent>(handle_infection_status_change);
    Ok(())
}

The fact that IncidenceReportItem derives Serialize is what makes this magic work. We define a report for this struct using the define_report! macro.

// in incidence_report.rs
define_report!(IncidenceReportItem);

The way we listen to events is almost identical to how we did it in the infection manager. First let's make the event handler, that is, the callback that will be called whenever an event is emitted.

// in incidence_report.rs
fn handle_infection_status_change(context: &mut Context, event: InfectionStatusEvent) {
    trace!(
        "Recording infection status change from {:?} to {:?} for {:?}",
        event.previous, event.current, event.entity_id
    );
    context.send_report(IncidenceReportItem {
        time: context.get_current_time(),
        person_id: event.entity_id,
        infection_status: event.current,
    });
}

Just pass an IncidenceReportItem to context.send_report()! We also emit a trace log message so we can trace the execution of our model.

In the init() function there is a little bit of setup needed. Also, we can't forget to register this callback to listen to InfectionStatusEvents.

// in incidence_report.rs
pub fn init(context: &mut Context) -> Result<(), IxaError> {
    trace!("Initializing incidence_report");

    // Output directory is relative to the directory with the Cargo.toml file.
    let output_path = PathBuf::from(env!("CARGO_MANIFEST_DIR"));

    // In the configuration of report options below, we set `overwrite(true)`, which is not
    // recommended for production code in order to prevent accidental data loss. It is set
    // here so that newcomers won't have to deal with a confusing error while running
    // examples.
    context
        .report_options()
        .directory(output_path)
        .overwrite(true);
    context.add_report::<IncidenceReportItem>("incidence")?;
    context.subscribe_to_event::<InfectionStatusEvent>(handle_infection_status_change);
    Ok(())
}

Note that:

- the configuration you do on context.report_options() applies to all reports attached to that context;

- using overwrite(true) is useful for debugging but potentially devastating for production;

- this init() function returns a Result, which will be whatever error context.add_report() returns if the CSV file cannot be created for some reason, or Ok(()) otherwise.

result and handling errors

The Rust Result<U, V> type is an enum used for error handling. It

represents a value that can either be a successful outcome (Ok) containing a

value of type U, or an error (Err) containing a value of type V. Think

of it as a built-in way to return and propagate errors without relying on

exceptions, similar to using “Either” types or special error codes in other

languages.

The ? operator works with Result to simplify error handling. When you

append ? to a function call that returns a Result, it automatically checks

if the result is an Ok or an Err. If it’s Ok, the value is extracted; if

it’s an Err, the error is immediately returned from the enclosing function.

This helps keep your code concise and easy to read by reducing the need for

explicit error-checking logic.
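A small standard-library example may make the ? operator concrete. The function names parse_and_add and parse_one_verbose are made up for this illustration and have nothing to do with Ixa. The second function spells out with match roughly what ? does in the first (ignoring the error type conversion that ? can also perform).

```rust
use std::num::ParseIntError;

// Parses two numbers and adds them. Each `?` returns early with the error if
// parsing fails; otherwise it extracts the value inside `Ok`.
fn parse_and_add(a: &str, b: &str) -> Result<i32, ParseIntError> {
    let x = a.trim().parse::<i32>()?;
    let y = b.trim().parse::<i32>()?;
    Ok(x + y)
}

// The same early-return logic for a single value, written out by hand.
fn parse_one_verbose(a: &str) -> Result<i32, ParseIntError> {
    let x = match a.trim().parse::<i32>() {
        Ok(value) => value,
        Err(e) => return Err(e),
    };
    Ok(x)
}
```

In incidence_report.rs the same thing happens with context.add_report(): if it returns an Err, init() returns that error immediately and the lines after it never run.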

If your IDE isn't capable of adding imports for you, the external symbols we need for this module are as follows.

// in incidence_report.rs
use std::path::PathBuf;

use ixa::prelude::*;
use ixa::trace;
use serde::Serialize;

use crate::infection_manager::InfectionStatusEvent;
use crate::people::{InfectionStatus, PersonId};

Next Steps

We have created several new modules. We need to make sure they are each

initialized with the Context before the simulation starts. Below is main.rs

in its entirety.

// main.rs
mod incidence_report;
mod infection_manager;
mod people;
mod transmission_manager;

use ixa::{error, info, run_with_args, Context};

static POPULATION: u64 = 100;
static FORCE_OF_INFECTION: f64 = 0.1;
static INFECTION_DURATION: f64 = 10.0;
static MAX_TIME: f64 = 200.0;

fn main() {
    let result = run_with_args(|context: &mut Context, _args, _| {
        // Add a plan to shut down the simulation after `MAX_TIME`, regardless of
        // what else is happening in the model.
        context.add_plan(MAX_TIME, |context| {
            context.shutdown();
        });

        // Initialize each module with the context before the simulation starts.
        people::init(context);
        transmission_manager::init(context);
        infection_manager::init(context);
        incidence_report::init(context).expect("Failed to init incidence report");
        Ok(())
    });

    match result {
        Ok(_) => {
            info!("Simulation finished executing");
        }
        Err(e) => {
            error!("Simulation exited with error: {}", e);
        }
    }
}

Exercises

- Currently the simulation runs until MAX_TIME even if every single person has been infected and has recovered. Add a check somewhere that calls context.shutdown() if there is no more work for the simulation to do. Where should this check live? Hint: Use context.query_entity_count.

- Analyze the data output by the incidence reporter. Plot the number of people with each InfectionStatus on the same axis to see how they change over the course of the simulation. Are the curves what we expect to see given our abstract model? Hint: Remember this model has a fixed force of infection, unlike a typical SIR model.

- Add another property that moderates the risk of infection of the individual. (Imagine, for example, that some people wash their hands more frequently.) Give a randomly sampled subpopulation that intervention and add a check to the transmission module to see if the person that we are attempting to infect has that property. Change the probability of infection accordingly. Hint: You will probably need some new constants, a new person property, a new random number generator, and the Bernoulli distribution.

Topics

- Indexing

- Burn-in Periods and Negative Time

- Handling Errors

- Performance and Profiling

- Profiling Module

- random module

- Reports

Understanding Indexing in Ixa

Syntax and Best Practices

Syntax:

// For single property indexes

// Somewhere during the initialization of `context`:

context.index_property::<Person, Age>();

// For multi-indexes

// Where properties are defined:

define_multi_property!((Name, Age, Weight), Person);

// Somewhere during the initialization of `context`:

context.index_property::<Person, (Name, Age, Weight)>();

Best practices:

- Index a property to improve performance of queries of that property.

- Create a multi-property index to improve performance of queries involving multiple properties.

- The cost of creating indexes is increased memory use, which can be significant for large populations. So it is best to only create indexes / multi-indexes that actually improve model performance.

- It may be best to call context.index_property::<Entity, Property>() in the init() method of the module in which the property is defined, or you can put all of your Context::index_property calls together in a main initialization function if you prefer.

- It is not an error to call Context::index_property in the middle of a running simulation or to call it twice for the same property.

- Calling Context::index_property enables indexing and catches the index up to the current population at the time of the call.

Property Value Storage in Ixa

To understand why some operations in Ixa are slow without an index, we need to understand how property data is stored internally and how an index provides Ixa an alternative view into that data.

In Ixa, each agent in a simulation—such as a person in a disease transmission model—is associated with a unique row of data. This data is stored in columnar form, meaning each property or field of a person (such as infection status, age, or household) is stored as its own column. This structure allows for fast and memory-efficient processing.

Let’s consider a simple example with two fields: PersonId and

InfectionStatus.

- PersonId: a unique identifier for each individual, which is represented as an integer internally (e.g., 1001, 1002, 1003, …).

- InfectionStatus: a status value indicating whether the individual is susceptible, infected, or recovered.

At a particular time during our simulation, we might have the following data:

| PersonId | InfectionStatus |
|---|---|
| 0 | susceptible |
| 1 | infected |
| 2 | susceptible |
| 3 | recovered |
| 4 | susceptible |
| 5 | susceptible |
| 6 | infected |
| 7 | susceptible |
| 8 | infected |
| 9 | susceptible |
| 10 | recovered |
| 11 | infected |
| 12 | infected |
| 13 | infected |
| 14 | recovered |

In the default representation used by Ixa, each field is stored as a column.

Internally, however, PersonId is not stored explicitly as data. Instead, it

is implicitly defined by the row number in the columnar data structure. That

is:

- The row number acts as the unique index (PersonId) for each individual.

- The InfectionStatus values are stored in a single contiguous array, where the entry at position i gives the status for the person with PersonId equal to i.

In this default layout, accessing the infection status for a person is a simple array lookup, which is extremely fast and requires minimal memory overhead.

But suppose instead of looking up the infection status of a particular PersonId, you wanted to look up which PersonId's were associated with a particular infection status, say, infected. If the property is not indexed, Ixa has to scan through the entire column and collect all PersonId's (row numbers) for which InfectionStatus has the value infected, and it has to do this each and every time we run a query for that property. If we do this frequently, all of this scanning can add up to quite a long time!

Property Index Structure

We could save a lot of time if we scanned through the InfectionStatus column once, collected the PersonId's for each InfectionStatus value, and just reused this table each time we needed to do this lookup. That's all an index is!

The index for our example column of data:

| InfectionStatus | List of PersonId's |
|---|---|
| susceptible | [0, 2, 4, 5, 7, 9] |
| infected | [1, 6, 8, 11, 12, 13] |
| recovered | [3, 10, 14] |

An index in Ixa is just a map between a property value and the list of all

PersonId's having that value. Now looking up the PersonId's for a given

property value is (almost) as fast as looking up the property value for a given

PersonId.
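To make the difference concrete, here is a minimal sketch in plain Rust of the two lookups described above. It is not Ixa's internal implementation: the Status enum and the functions example_column, scan, and build_index are hypothetical names, and a Vec indexed by row number plays the role of the property column from the example table.

```rust
use std::collections::HashMap;

#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash)]
enum Status {
    Susceptible,
    Infected,
    Recovered,
}

// The example column from the table above; the position in the Vec is the PersonId.
fn example_column() -> Vec<Status> {
    use Status::*;
    vec![
        Susceptible, Infected, Susceptible, Recovered, Susceptible,
        Susceptible, Infected, Susceptible, Infected, Susceptible,
        Recovered, Infected, Infected, Infected, Recovered,
    ]
}

// Unindexed query: walk the whole column on every call.
fn scan(column: &[Status], wanted: Status) -> Vec<usize> {
    column
        .iter()
        .enumerate()
        .filter(|(_, status)| **status == wanted)
        .map(|(id, _)| id)
        .collect()
}

// Index: walk the column once and remember the ids for each value.
fn build_index(column: &[Status]) -> HashMap<Status, Vec<usize>> {
    let mut index: HashMap<Status, Vec<usize>> = HashMap::new();
    for (id, status) in column.iter().enumerate() {
        index.entry(*status).or_default().push(id);
    }
    index
}
```

After build_index has run once, every later lookup is a single hash map access, while scan repeats the full pass each time it is called.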

The Costs of Creating an Index

There are two costs you have to pay for indexing:

- The index needs to be maintained as the simulation evolves the state of the population. Every change to any person's infection status needs to be reflected in the index. While this operation is fast for a single update, it isn't instant, and the accumulation of millions of little updates to the index can add up to a real runtime cost.

- The index uses memory. In fact, it uses more memory than the original column of data, because it has to store both the InfectionStatus values (in our example) and the PersonId values, while the original column only stores the InfectionStatus (the PersonId's were implicitly the row numbers).
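Both costs show up in even a toy version of an indexed column. The following sketch is an illustration only, under the assumption of a simple map from value to a set of ids; IndexedColumn and its methods are hypothetical and are not how Ixa stores its data. Every write has to touch the index as well as the column, and the index holds every id a second time.

```rust
use std::collections::{BTreeSet, HashMap};

#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash)]
enum Status {
    Susceptible,
    Infected,
    Recovered,
}

#[derive(Default)]
struct IndexedColumn {
    // The column itself: position i holds the status of person i.
    values: Vec<Status>,
    // The index: extra memory that stores every id again, grouped by value.
    index: HashMap<Status, BTreeSet<usize>>,
}

impl IndexedColumn {
    fn add_person(&mut self, status: Status) -> usize {
        let id = self.values.len();
        self.values.push(status);
        self.index.entry(status).or_default().insert(id);
        id
    }

    // Each property change pays a small extra cost to keep the index current.
    fn set(&mut self, id: usize, new_status: Status) {
        let old_status = self.values[id];
        if old_status == new_status {
            return;
        }
        if let Some(ids) = self.index.get_mut(&old_status) {
            ids.remove(&id);
        }
        self.index.entry(new_status).or_default().insert(id);
        self.values[id] = new_status;
    }

    fn ids_with(&self, status: Status) -> Vec<usize> {
        self.index
            .get(&status)
            .map(|ids| ids.iter().copied().collect::<Vec<usize>>())
            .unwrap_or_default()
    }
}
```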

creating vs. maintaining an index

Suspiciously missing from this list of costs is the initial cost of scanning through the property column to create the index in the first place, but actually whether you maintain the index from the very beginning or you index it all at once doesn't matter: the sum of all the small efforts to update the index every time a person is added is equal to the cost of creating the index from scratch for an existing set of data.

Usually scanning through the whole property column is so slow relative to maintaining an index that the extra computational cost of maintaining the index is completely dwarfed by the time savings, even for infrequently queried properties. In other words, in terms of running time, an index is almost always worth it. For smaller population sizes in particular, at worst you shouldn't see a meaningful slow-down.

Memory use is a different story. In a model with tens of millions of people and many properties, you might want to be more thoughtful about which properties you index, as memory use can reach into the gigabytes. While we are in an era where tens of gigabytes of RAM is commonplace in workstations, cloud computing costs and the selection of appropriate virtual machine sizes for experiments in production recommend that we have a feel for whether we really need the resources we are using.

a query might be the wrong tool for the job

Sometimes, the best way to address a slow query in your model isn’t to add indexes, but to remove the query entirely. A common scenario is when you want to report on some aggregate statistics, for example, the total number of people having each infection status. It might be much better to just track the aggregate value directly than to run a query for it every time you want to write it to a report. As usual, when it comes to performance issues, measure your specific use case to know for sure what the best strategy is.
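One way to track an aggregate directly is to keep a running tally that is adjusted on every change. The sketch below is plain Rust with hypothetical names (StatusCounts, person_added, status_changed); it assumes the model calls status_changed from a property change handler, passing the same previous and current values that the events shown earlier carry.

```rust
use std::collections::HashMap;

#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash)]
enum Status {
    Susceptible,
    Infected,
    Recovered,
}

// Running totals per status, updated incrementally instead of queried.
#[derive(Default)]
struct StatusCounts {
    counts: HashMap<Status, usize>,
}

impl StatusCounts {
    // Call once for each person added to the population.
    fn person_added(&mut self, status: Status) {
        *self.counts.entry(status).or_insert(0) += 1;
    }

    // Call from a change handler with the previous and current values.
    fn status_changed(&mut self, previous: Status, current: Status) {
        if let Some(n) = self.counts.get_mut(&previous) {
            *n = n.saturating_sub(1);
        }
        *self.counts.entry(current).or_insert(0) += 1;
    }

    fn count(&self, status: Status) -> usize {
        self.counts.get(&status).copied().unwrap_or(0)
    }
}
```

Reading a count is then a constant-time lookup, no matter how large the population is.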

Multi Property Indexes

To speed up queries involving multiple properties, use a multi-property index

(or multi-index for short), which indexes multiple properties jointly.

Suppose we have the properties AgeGroup and InfectionStatus, and we want to

speed up queries of these two properties:

let query = with!(Person, AgeGroup(30), InfectionStatus::Susceptible);

let age_and_status = context.query_result_iterator(query); // Bottleneck

We could index AgeGroup and InfectionStatus individually, but in this case we can do even better with a multi-index, which treats each pair of values (AgeGroup, InfectionStatus) as if it were a single value. Such a multi-index might look like this:

| (AgeGroup, InfectionStatus) | PersonId's |
|---|---|
| (10, susceptible) | [16, 27, 31] |
| (10, infected) | [38] |
| (10, recovered) | [18, 23, 29, 34, 39] |
| (20, susceptible) | [12, 25, 26] |
| (20, infected) | [2, 3, 9, 14, 17, 19, 28, 33] |
| (20, recovered) | [13, 20, 22, 30, 37] |
| (30, susceptible) | [0, 1, 11, 21] |
| (30, infected) | [5, 6, 7, 10, 15, 24, 32] |
| (30, recovered) | [4, 8, 35, 36] |

Ixa hides the boilerplate required for creating a multi-index with the macro

define_multi_property!:

define_multi_property!((AgeGroup, InfectionStatus), Person);

Creating a multi-index does not automatically create indexes for each of the properties individually, but you can do so yourself if you wish, for example, if you had other single property queries you want to speed up.
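Conceptually, a multi-index is an ordinary index whose key is a tuple. The sketch below shows that idea in plain Rust and is not a description of what define_multi_property! generates: the AgeGroup alias, the Status enum, and build_multi_index are hypothetical, and two parallel slices stand in for the two property columns.

```rust
use std::collections::HashMap;

type AgeGroup = u8;

#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash)]
enum Status {
    Susceptible,
    Infected,
    Recovered,
}

// Builds a joint index keyed by the pair (AgeGroup, Status).
// Position i in both slices describes the person with id i.
fn build_multi_index(
    ages: &[AgeGroup],
    statuses: &[Status],
) -> HashMap<(AgeGroup, Status), Vec<usize>> {
    let mut index: HashMap<(AgeGroup, Status), Vec<usize>> = HashMap::new();
    for (id, (age, status)) in ages.iter().zip(statuses).enumerate() {
        index.entry((*age, *status)).or_default().push(id);
    }
    index
}
```

A query for both values at once becomes a single lookup with the key (30, Status::Susceptible), instead of a lookup in each single-property index followed by an intersection of the two id lists.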

The Benefits of Indexing - A Case Study

In the Ixa source repository you will find the births-deaths example in the

examples/ directory. You can build and run this example with the following

command:

cargo run --example births-deaths

Now let's edit the input.json file and change the population size to 1000:

{

"population": 1000,

"max_time": 780.0,

"seed": 123,

⋮

}

We can time how long the simulation takes to run with the time command. Here's

what the command and output look like on my machine:

$ time cargo run --example births-deaths

Finished `dev` profile [unoptimized + debuginfo] target(s) in 0.30s

Running `target/debug/examples/births-deaths`

cargo run --example births-deaths 362.55s user 1.69s system 99% cpu 6:06.35 total

For a population size of only 1000 it takes more than six minutes to run!

Let's index the InfectionStatus property. In

examples/births-deaths/src/lib.rs we add the following line somewhere in the

initialize() function:

context.index_property::<Person, InfectionStatus>();

We also need to import InfectionStatus by putting use crate::population_manager::InfectionStatus; near the top of the file. To be fair, let's compile the example separately so we don't include the compile time in the run time:

cargo build --example births-deaths

Now run it again:

$ time cargo run --example births-deaths

Finished `dev` profile [unoptimized + debuginfo] target(s) in 0.09s

Running `target/debug/examples/births-deaths`

cargo run --example births-deaths 5.79s user 0.07s system 97% cpu 5.990 total

From six minutes to six seconds! This kind of dramatic speedup is typical with indexes. It allows models that would otherwise struggle with a population size of 1000 to handle populations in the tens of millions.

Exercises:

- Even six seconds is an eternity for modern computer processors. Try to get this example to run with a population of 1000 in ~1 second*, two orders of magnitude faster than the unindexed version, by indexing additional properties.

- Using only a single property index of InfectionStatus and a single multi-index, get this example to run in ~0.5 seconds. This illustrates that it's better to index the right properties than to just index everything.

*Your timings will be different but should be roughly proportional to these.

Burn-in Periods and Negative Time

Syntax Summary

Burn-in is implemented by setting a negative start time and treating 0.0 as the beginning of the analysis window:

context.set_start_time(-d);

Introduction

In many epidemiological and agent-based models, the state you care about at time 0.0 does not arise instantaneously.

Populations need time to stabilize. Households and partnerships need to form. Immunity must reflect prior exposure rather than arbitrary assignment. Latent infections may need to be seeded and allowed to evolve into a realistic distribution. In short, the model often needs to run before it begins.

A common but fragile solution is to run a separate “initialization” simulation, snapshot its state, and then start a second simulation for analysis. This approach complicates reproducibility and splits what is conceptually one model into multiple executions.

Ixa provides a simpler mechanism: treat burn-in as part of the same execution. Instead of resetting time or stitching simulations together, you allow the timeline to extend into negative values. You then designate 0.0 as the beginning of your analysis window.

Negative time is not a special execution mode in Ixa. It is simply earlier simulation time. The event queue, scheduling rules, and execution semantics are identical before and after 0.0. What may differ is your model logic. You may choose to disable transmission, suppress reporting, use alternate parameters, or run simplified dynamics during burn-in. These differences arise from your code, not from special treatment by the framework.

The Core Pattern

Burn-in in Ixa is implemented by extending the simulation timeline into negative values and treating 0.0 as the beginning of the analysis window. There is only one execution and one event queue; burn-in and the main simulation evolve on the same continuous timeline.

The core pattern is:

- Choose a burn-in duration (for example, 180 days).

- Set the simulation start time to the negative of that duration.

- Differentiate burn-in behavior from main-simulation behavior using one of the two methods below.

There are two standard approaches:

- Time-gated logic: behavior depends directly on the current time with context.get_current_time() >= 0.0 checks throughout the code.

- Activation at 0.0: a plan scheduled at time 0.0 enables or modifies model state (for example, by turning on transmission, enabling interventions, or beginning data collection).

Both approaches operate on the same continuous timeline. The choice depends on whether you prefer localized time checks or a single activation event at 0.0. There is no automatic transition at 0.0. Any change in behavior must be implemented explicitly in your model.

1. Activation at 0.0

Schedule a plan at 0.0 that enables or modifies model behavior. For example, transmission, reporting, or interventions may be turned on at the boundary. This approach centralizes the transition logic in a single plan.

The following example burns in for 180 days and enables full model dynamics at time 0.0:

use ixa::prelude::*;

let mut context = Context::new();

// Burn in for 180 days before the "official" start.
context.set_start_time(-180.0);

// Optional: perform initialization at the start of burn-in.
context.add_plan(-180.0, |ctx| {
    // Initialize or seed state here.
});

// Method 1: Activation at 0.0
context.add_plan_with_phase(
    0.0,
    |ctx| {
        // Enable full dynamics, reporting, interventions, etc.
    },
    ExecutionPhase::First,
);

context.execute();

In this example we use context.add_plan_with_phase with ExecutionPhase::First instead of the usual context.add_plan so that the activation plan runs before any other plans that happen to be scheduled at time 0.0.

2. Time-Gated Logic in Model Code

Partition behavior directly by checking the current time:

fn transmission_step(context: &mut Context) {

if context.get_current_time() < 0.0 {

// Burn-in behavior

return;

}

// Main simulation behavior

}

This approach makes the phase boundary explicit in the code where behavior occurs. The downside is that you may need to repeat this check in many separate places within model code.
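One way to limit that repetition is to route every check through a single helper. The sketch below is a minimal illustration in plain Rust with no Ixa dependency; Phase and phase_at are hypothetical names, not part of Ixa, and in a model the argument would typically be context.get_current_time().

```rust
/// Which side of the 0.0 boundary a simulation time falls on.
/// (Hypothetical type for illustration; not part of Ixa.)
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Phase {
    BurnIn,
    Main,
}

/// Classifies a simulation time relative to the analysis boundary at 0.0.
/// Time 0.0 itself counts as part of the main simulation.
pub fn phase_at(time: f64) -> Phase {
    if time < 0.0 {
        Phase::BurnIn
    } else {
        Phase::Main
    }
}
```

Model code can then match on phase_at(context.get_current_time()) rather than comparing against 0.0 directly, so the boundary convention lives in one place.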

Practical Considerations

Burn-in relies on the same scheduling rules as the rest of the simulation. The following constraints are important when working with negative time.

Set the Start Time Before Execution

context.set_start_time(...) must be called before context.execute() and may only be called once.

The start time may be set to an arbitrarily low number. When execution begins, the event queue advances directly to the earliest scheduled plan. If burn-in plans are scheduled stochastically, it may be useful to choose a sufficiently low start time such that the probability of scheduling a plan earlier than the start time is effectively zero.

Plans Cannot Be Scheduled Earlier Than the Effective Start Time

A plan cannot be scheduled earlier than the simulation’s effective current time. Before execution begins:

- If no start time is set, the earliest allowable plan time is 0.0.

- If start_time = s, the earliest allowable plan time is s.

For burn-in, this means you must set a negative start time before scheduling any negative-time plans.
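The rule can be restated as a small check. This is only a sketch of the constraint as described here, not Ixa's internal implementation; earliest_plan_time and can_schedule_before_execution are hypothetical names, and the Option models whether a start time has been set.

```rust
/// Earliest plan time allowed before execution begins.
/// `None` models "no start time was set", in which case the floor is 0.0.
/// (Hypothetical helper; it only restates the rule in the text.)
pub fn earliest_plan_time(start_time: Option<f64>) -> f64 {
    start_time.unwrap_or(0.0)
}

/// Returns true if a plan at `plan_time` respects the rule above.
pub fn can_schedule_before_execution(plan_time: f64, start_time: Option<f64>) -> bool {
    plan_time >= earliest_plan_time(start_time)
}
```

With a start time of -180.0, a plan at -90.0 passes the check; with no start time set, the same plan does not.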

Periodic Plans Begin at 0.0

add_periodic_plan_with_phase(...) schedules its first execution at 0.0, not at the simulation start time. This is often desirable: reporting or intervention logic naturally begins at the analysis boundary. However, periodic behavior will not automatically run during negative-time burn-in.

If periodic activity is required during burn-in, schedule the first execution manually at the desired negative time and reschedule from there.
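The rescheduling arithmetic can be sketched without any Ixa dependency. The helper names below are hypothetical. In a model, each tick would be a plan that does its work and then calls context.add_plan with the next time, stopping once the next tick would reach 0.0 so that any periodic plan starting at 0.0 is not duplicated.

```rust
/// Time of the next burn-in tick, or `None` once the next tick would
/// land at or after 0.0. (Hypothetical helper for illustration.)
pub fn next_burn_in_tick(current_time: f64, period: f64) -> Option<f64> {
    let next = current_time + period;
    if next < 0.0 {
        Some(next)
    } else {
        None
    }
}

/// Every time at which a self-rescheduling burn-in plan would run,
/// beginning at `start` and stepping by `period` while time stays negative.
pub fn burn_in_ticks(start: f64, period: f64) -> Vec<f64> {
    assert!(period > 0.0, "period must be positive");
    let mut ticks = Vec::new();
    // A non-negative start means there is no burn-in tick at all.
    let mut current = if start < 0.0 { Some(start) } else { None };
    while let Some(t) = current {
        ticks.push(t);
        current = next_burn_in_tick(t, period);
    }
    ticks
}
```

For a start of -30.0 and a period of 10.0, the ticks fall at -30.0, -20.0, and -10.0.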

Reports and Outputs Include Negative Timestamps

Outputs generated during burn-in will carry negative timestamps. This is usually intentional. If downstream analysis should begin at 0.0, filter during post-processing or guard reporting logic within the model.
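As a minimal sketch of the post-processing option, the function below drops rows recorded during burn-in. ReportRow and its fields are hypothetical stand-ins for whatever your report actually records; nothing here is an Ixa type.

```rust
/// One row of a hypothetical report, after it has been read back in.
#[derive(Debug, Clone, PartialEq)]
pub struct ReportRow {
    pub time: f64,
    pub infected: u32,
}

/// Keeps only rows inside the analysis window (time >= 0.0).
pub fn analysis_window(rows: &[ReportRow]) -> Vec<ReportRow> {
    rows.iter()
        .filter(|row| row.time >= 0.0)
        .cloned()
        .collect()
}
```

Filtering after the run keeps the burn-in rows available for diagnostics, whereas guarding the reporting logic inside the model never writes them.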

Include Negative Time in Initialization Tests

If negative time is part of your model initialization strategy, unit tests that validate initialization behavior may also need to include negative-time execution. Tests that assume the model begins at 0.0 may otherwise miss burn-in effects.

0.0 Is a Convention, Not a Reset

Time does not reset at 0.0. The event queue continues uninterrupted across the boundary. Any transition in behavior at 0.0 must be implemented explicitly in model logic or in a plan scheduled at that time.

Common Burn-in Designs

Burn-in is not limited to simple state initialization. In practice, it is used to execute a specialized variant of the model prior to the analysis window. Several patterns recur frequently.

Reduced or Modified Dynamics

During burn-in, some components of the model may operate differently:

- Transmission disabled while demography and recovery remain active.

- Alternate parameter values used to drive the system toward a desired state.

- Simplified dynamics used to establish equilibrium.

These differences are implemented through time-gated logic or by enabling full dynamics at 0.0.

Network or Structure Formation

In models with dynamic networks—households, partnerships, contact graphs—it is often desirable to allow the structure to stabilize before transmission begins. Burn-in provides a period during which relationships can form, dissolve, and equilibrate before infections are introduced or measurement begins.

Seeding and Equilibrium Targeting

Rather than assigning initial states arbitrarily, models may:

- Introduce infections gradually.

- Allow immunity to accumulate through prior exposure.

- Run until summary metrics stabilize before beginning analysis.

Negative time allows this process to occur within the same execution, without splitting the model into separate runs.

Delayed Reporting or Intervention

Often, the model’s full dynamics operate throughout burn-in, but outputs or interventions are suppressed until t >= 0.0. In this case, burn-in shapes the system state, while the analysis window determines what is recorded or evaluated.

Burn-in is therefore not a distinct modeling technique. It is a scheduling strategy that allows different behaviors to operate before and after a chosen time boundary on a single continuous timeline.

Handling Errors

Ixa generates errors using its IxaError type. In Ixa v2, this error type is intended only for errors Ixa itself generates. For errors your own code produces, it is up to you to create your own error type or use a "universal" error type crate like anyhow.

Summary

Use anyhow and its universal error type if:

- you want an easy, no-nonsense way to deal with errors with the least amount of effort

- you don't need to define your own error types or variants

Use thiserror to help you easily define your own error type if:

- you want to have your own error types / error enum variants

- you want control over how you manage errors in your model
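To give a feel for the "universal" error type option, the sketch below uses the standard library's Box<dyn Error + Send + Sync> as a stand-in for anyhow's error type, so that it runs without extra dependencies. This is a substitution for illustration, not anyhow itself. AnyResult and parse_household_size are hypothetical names.

```rust
use std::error::Error;

/// Standard-library stand-in for a "universal" result type.
/// (Assumption: used here in place of anyhow's result type.)
pub type AnyResult<T> = Result<T, Box<dyn Error + Send + Sync>>;

/// Parses a household size from text. Hypothetical model helper.
pub fn parse_household_size(raw: &str) -> AnyResult<u32> {
    // `?` converts the ParseIntError into the boxed error automatically.
    let size: u32 = raw.trim().parse()?;
    if size == 0 {
        // A plain string message also converts into the boxed error.
        return Err("household size must be at least 1".into());
    }
    Ok(size)
}
```

The function returns one error type for both failure modes without defining any enum, which is the convenience a universal error type trades against structured matching.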

Creating your own error types

You might want your own error type if you want to generate structured errors from your own code or want your own structured error handling code. For example, you might want to have functions that return Result<U, V> to indicate that they might fail:

fn get_itinerary(person_id: PersonId, context: &Context) -> Result<Itinerary, ModelError> {

// If we can't retrieve an itinerary for the given person, we return an error

// that gives information about what went wrong:

return Err(ModelError::NoItineraryForPerson);

}

When you call this function, you can take more specific action based on what it returns:

match get_itinerary(person_id, context) {

Ok(itinerary) => {

/* Do something with the itinerary */

}

Err(ModelError::NoItineraryForPerson) => {

/* Handle the `NoItineraryForPerson` error */

}

Err(err) => {

/* A different error occurred; handle it in a different way */

}

}

The thiserror crate reduces the boilerplate you have to write to implement your own error types (an enum implementing the std::error::Error trait). In practice, model code often needs to report different types of errors:

- errors defined by the model itself

- errors returned by Ixa APIs such as context.add_report()

- errors from other crates or from the standard library

That usually means your error enum contains a mix of your own variants and variants that wrap foreign error types. For example:

use ixa::error::IxaError;

use thiserror::Error;

#[derive(Error, Debug)]

pub enum ModelError {

#[error("model error: {0}")]

ModelError(String),

#[error("ixa error")]

IxaError(#[from] IxaError),

#[error("string error")]

StringError(#[from] std::string::FromUtf8Error),

#[error("parse int error")]

ParseIntError(#[from] std::num::ParseIntError),

#[error("ixa csv error")]

CsvError(#[from] ixa::csv::Error),

}

thiserror automatically generates:

- impl std::error::Error for ModelError

- impl Display for ModelError

- From<IxaError>, From<std::string::FromUtf8Error>, From<std::num::ParseIntError>, and From<ixa::csv::Error> (because of #[from])

- source() wiring for error chaining

That last item is what lets one error wrap another: the std::error::Error trait has a source(&self) -> Option<&(dyn Error + 'static)> method. When an error returns another error from source(), it is saying "this error happened because of that other error." Error reporters can then walk the chain and show both the top-level message and the underlying cause.

You can implement all of this without the thiserror crate, but thiserror saves you a lot of boilerplate. With thiserror, you usually do not write source() yourself. A field marked with #[from] or #[source] is treated as the underlying cause and returned from source() automatically. #[from] also generates the corresponding From<...> impl, while #[source] only marks the wrapped error as the cause.
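To make the saved boilerplate concrete, here is a hand-written sketch of roughly what those derives amount to, reduced to two variants and using only the standard library. It is an approximation for illustration, not thiserror's actual generated code. parse_age and error_chain are hypothetical helpers, with error_chain standing in for an error reporter that walks source().

```rust
use std::error::Error;
use std::fmt;
use std::num::ParseIntError;

#[derive(Debug)]
pub enum ModelError {
    ModelError(String),
    ParseIntError(ParseIntError),
}

// Hand-written equivalent of the #[error("...")] attributes.
impl fmt::Display for ModelError {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        match self {
            ModelError::ModelError(msg) => write!(f, "model error: {msg}"),
            ModelError::ParseIntError(_) => write!(f, "parse int error"),
        }
    }
}

// Hand-written source() wiring: only the wrapping variant has a cause.
impl Error for ModelError {
    fn source(&self) -> Option<&(dyn Error + 'static)> {
        match self {
            ModelError::ModelError(_) => None,
            ModelError::ParseIntError(err) => Some(err),
        }
    }
}

// Hand-written equivalent of #[from]; this is what lets `?` convert.
impl From<ParseIntError> for ModelError {
    fn from(err: ParseIntError) -> Self {
        ModelError::ParseIntError(err)
    }
}

/// Hypothetical model helper that fails with a wrapped ParseIntError.
pub fn parse_age(raw: &str) -> Result<u8, ModelError> {
    Ok(raw.parse::<u8>()?)
}

/// Collects the message of an error and of every cause behind it.
pub fn error_chain(err: &dyn Error) -> Vec<String> {
    let mut messages = vec![err.to_string()];
    let mut cause = err.source();
    while let Some(inner) = cause {
        messages.push(inner.to_string());
        cause = inner.source();
    }
    messages
}
```

Calling error_chain on the error returned by parse_age("abc") yields two messages: the outer "parse int error" and the standard library's own description of the parse failure.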

The thiserror-based ModelError enum shown earlier demonstrates several useful patterns:

- ModelError::ModelError is a model-specific error variant that you define yourself.

- ModelError::IxaError wraps any IxaError variant, which is useful when Ixa code returns an IxaError and you want to propagate it as part of your model's error type.

- ModelError::CsvError wraps errors returned from the vendored CSV crate.

- ModelError::StringError and ModelError::ParseIntError wrap standard library error types.

All of these wrapped variants participate in the standard error chain through source(). If one of your model functions calls into Ixa or another library and that call fails, your model error can preserve the original cause while still returning a single model-specific error type.

For example, if you want to add model-specific context around an IxaError instead of directly converting it with #[from], you can write:

use ixa::error::IxaError;

use thiserror::Error;

#[derive(Error, Debug)]

pub enum ModelError {

#[error("failed to load itinerary for person {person_id}")]

LoadItinerary {

person_id: PersonId,

#[source]

source: IxaError,

},

}

Now Display prints the outer message, while source() returns the inner